Welcome to my latest newsletter, What ladidai Has to Say, where I discuss tunes, tech, and trends that interest me. I’m ladi and I’m glad you’re here.

I started writing this edition in late February. It is now late March. That gap is the story.

I rewrote this LinkedIn post five times on Friday, February 27. Today is March 23. Stay with me.

In the 24 days between then and now, the story kept moving. So did I. What you're about to read is the full arc: from one of the wildest 48-hour news cycles I've ever covered, to where we are right now, today, as I'm finally hitting send.

Let's get into it.

The part nobody's framing correctly

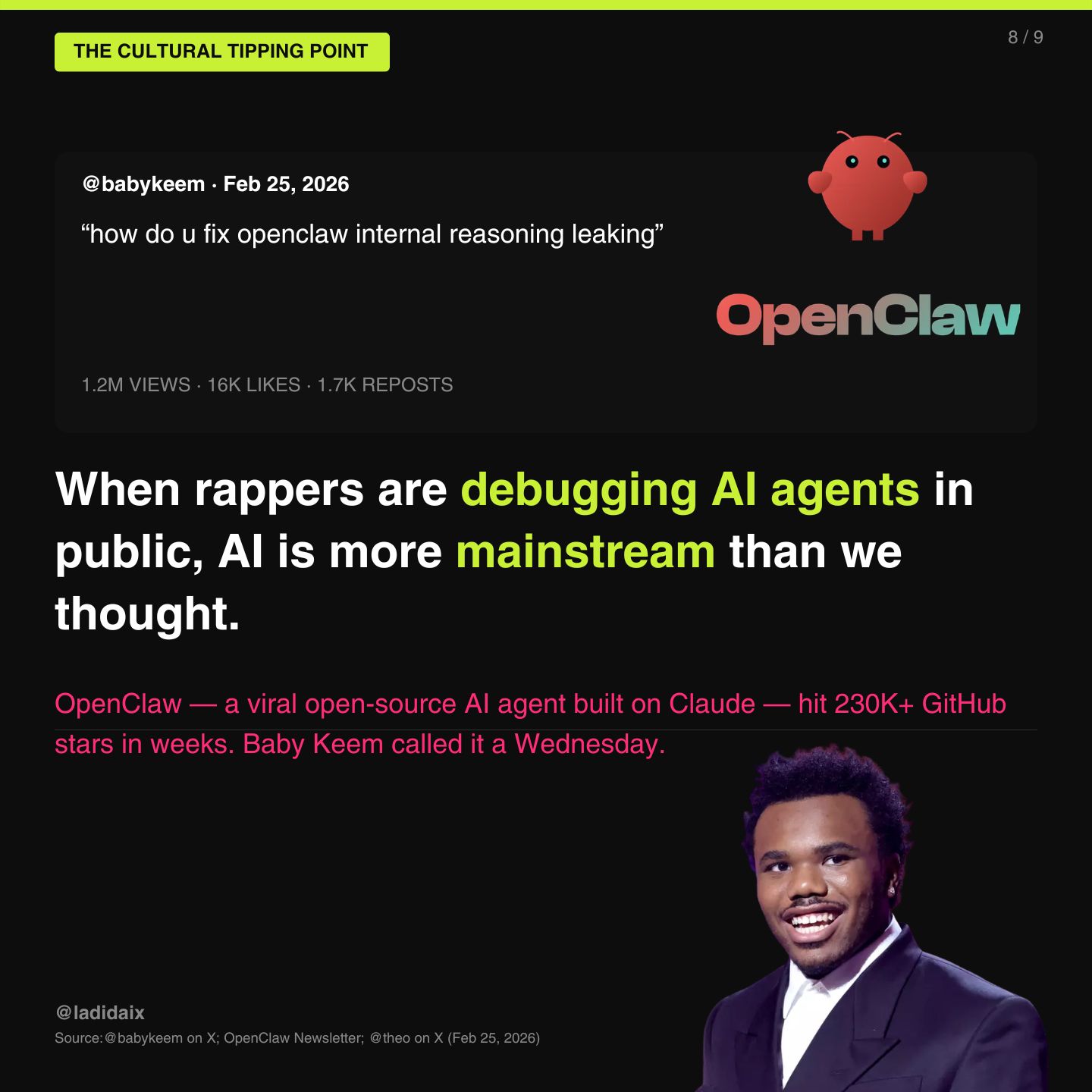

Before we get into the Pentagon drama — and we will — I want to start somewhere most outlets didn't: Wednesday, February 25th, when rapper Baby Keem posted on X:

"how do u fix openclaw internal reasoning leaking"

3.5 million views. 18,000 likes. From a Grammy-nominated artist casually debugging an open-source AI agent in public like it was a typical Tuesday, because for him it was.

OpenClaw — an open-source AI agent built on Claude — had just hit 230,000+ GitHub stars in a matter of weeks. It is not a mainstream consumer product. It is a developer tool. And one of the most culturally relevant artists of his generation was publicly troubleshooting it on social media.

I've written before about how you can track mainstream technology adoption not through tech press coverage, but through cultural osmosis — when the artists start talking about it without prompting, without sponsorship, without a press release. That's when something has truly arrived.

Baby Keem was not doing a brand deal. He was not on a panel at SXSW. He was just using the tool, hitting a problem, and asking the internet for help. The way you do when something is just part of your life.

Then, a couple weeks later, Meek Mill posted on X that he needed to use Palantir for an hour.

Yes. Palantir. The $384 billion Army surveillance contractor.

Within minutes, a Palantir Forward Deployed Architect replied with a direct signup link. "Here you go, lmk if you need help."

The sales machine never sleeps.

What makes this more than a funny Twitter moment is the context. Meek also recently joined LinkedIn, where he's posting about wanting tech partnerships, about criminal justice reform, about wanting banks to invest in his music. He's actively trying to navigate these same systems we've been discussing. The intersection of surveillance tech, music industry economics, and criminal justice that Meek Mill embodies in his actual life is the same intersection this entire news cycle is about. He just didn't know he was posting into the middle of it.

Stay with me — there's a Meek update at the end of this piece that I need you to see.

And then there's Charlie Puth, who was just named Chief Music Officer of AI music platform Moises. Not running from AI, but creating with it. "AI is never going to wipe us off the planet creatively," he said. "We humans have to learn how to work with it."

Three artists. Three different relationships to the same technology. Baby Keem debugging agents. Meek Mill asking about surveillance software. Charlie Puth taking an executive role at an AI company. None of them are doing what the tech press predicted artists would do, which was either resist or be replaced.

AI has arrived. Not in the "ChatGPT launched" way. In the "rappers are sliding into Palantir's DMs" way. Those are different thresholds. The second one matters more.

Then Jack Dorsey Said the Quiet Part Out Loud

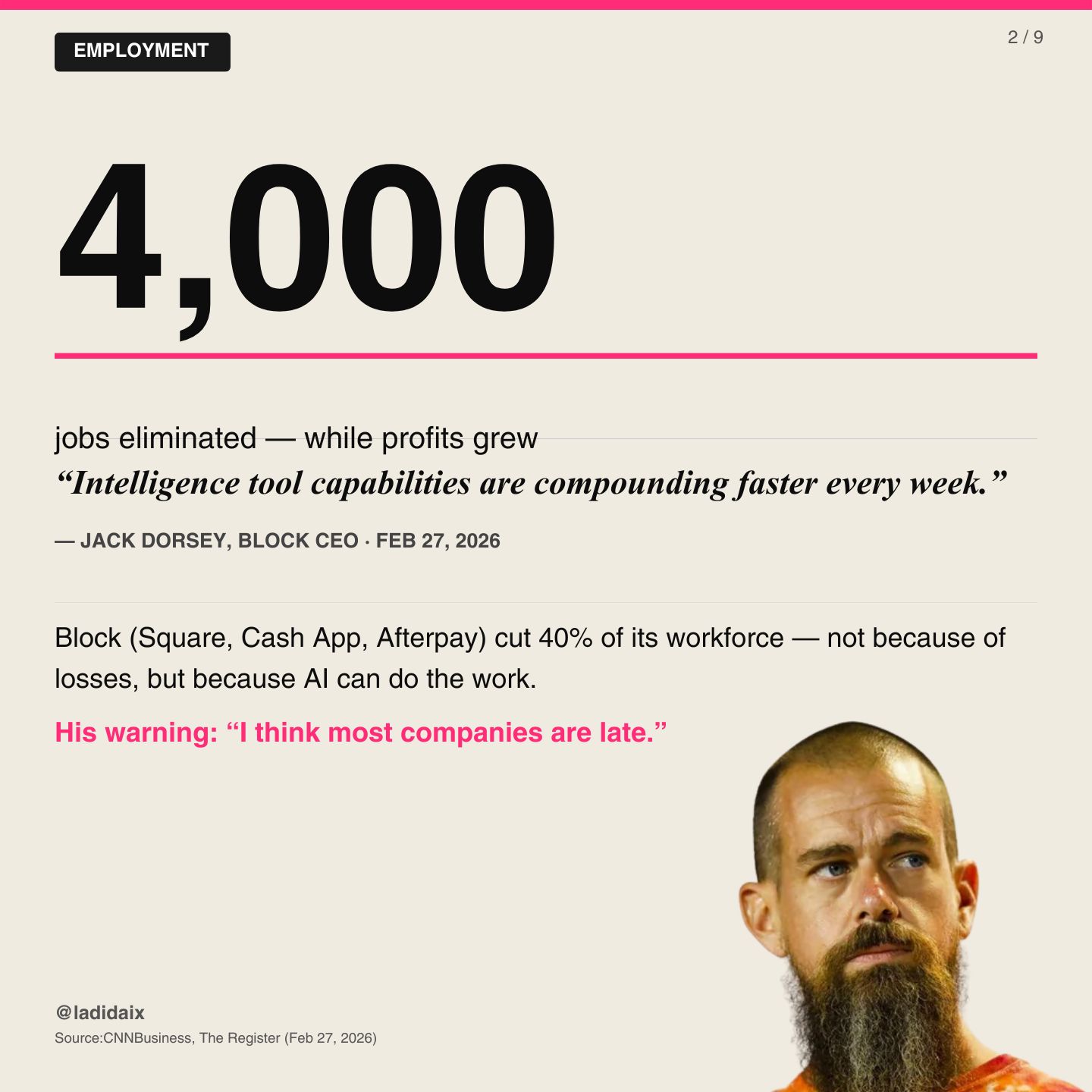

The same week, on Thursday February 26th, Block — the company behind TIDAL, Square, and Cash App — announced it was cutting 4,000 jobs. Forty percent of its workforce.

Not because of losses. Block's profits grew.

CEO Jack Dorsey's explanation was remarkably blunt: "Intelligence tool capabilities are compounding faster every week." His warning to the broader business world: "I think most companies are late."

This is the employment story that doesn't get told cleanly. The narrative we're used to is: AI takes jobs in factories, in call centers, in repetitive work. The uncomfortable update is: AI is now taking jobs at fintech companies with sophisticated workforces, and the CEO is saying it openly, without apology, and predicting it's just the beginning.

4,000 people lost their jobs that week not because the company was struggling. Because AI got good enough to replace them while the company was thriving. That distinction matters enormously and it is not getting nearly enough attention.

But here's the part of this story that stayed with me. A Cash App employee who wasn't laid off decided to quit anyway, immediately, on February 27th, the day after the announcement. They wrote about it publicly on LinkedIn. Their reasoning: a company that can Thanos-snap half its workforce doesn't get two weeks' notice. They asked to be included in the layoff. They noted that the cuts disproportionately hit certain teams, and that some of their colleagues had visa issues that put them at risk of deportation as a direct result of losing their jobs.

They let the choppa sing on LinkedIn, as someone put it on X. And the post went viral — not because it was dramatic, but because it was honest. Because it named something most people in tech are feeling but not saying: that the institutional knowledge walking out the door, the bus factor, the human cost — none of that shows up in the earnings call.

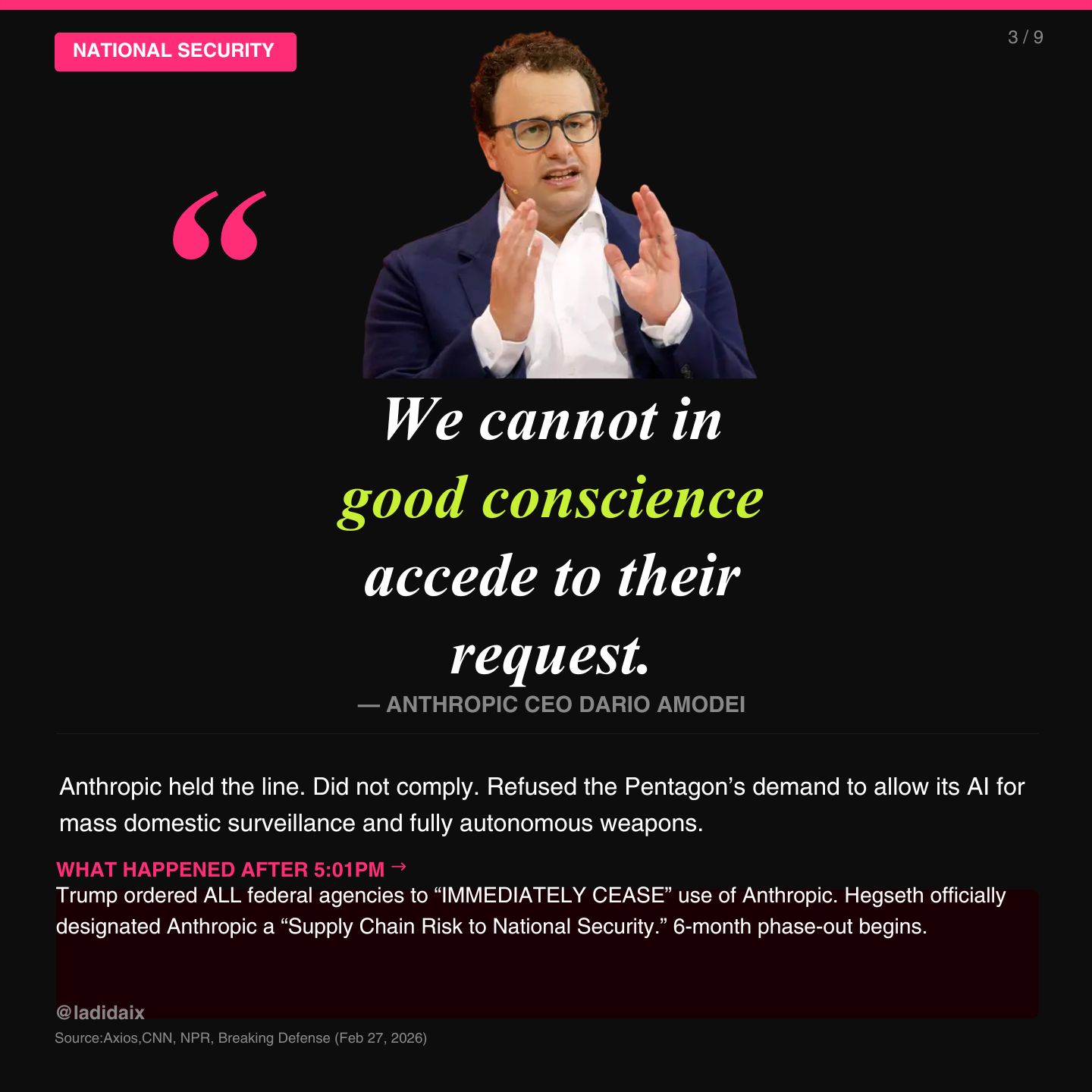

The Ethics War Nobody Was Supposed to See

Here's where that week got strange.

For months, Anthropic, the AI safety company behind Claude, had been quietly negotiating with the Pentagon over the terms of its $200 million defense contract. The sticking points were two uses Anthropic refused to allow: mass domestic surveillance of Americans and fully autonomous weapons.

On Tuesday, February 24th, Defense Secretary Pete Hegseth met with Anthropic CEO Dario Amodei at the Pentagon. The message, per sources: no private company gets to dictate terms to the U.S. military.

By Wednesday, the Pentagon had asked Boeing and Lockheed Martin to assess their exposure to Anthropic. The machinery of blacklisting was already moving before the public knew there was a fight.

On Thursday, Amodei published his statement. The language was careful, clear, and unambiguous: Anthropic "cannot in good conscience" comply. Not because of ideology, but because — in Anthropic's view — today's AI models are not reliable enough to be trusted in fully autonomous weapons systems, and mass domestic surveillance of Americans is a fundamental rights violation. Full stop.

Pentagon spokesperson Sean Parnell responded by setting a deadline on X: comply by 5:01pm Friday or be designated a national security risk. Emil Michael, the Pentagon's Under Secretary for Research and Engineering, went further, calling Amodei a "liar" with a "God complex" who was "putting our nation's safety at risk."

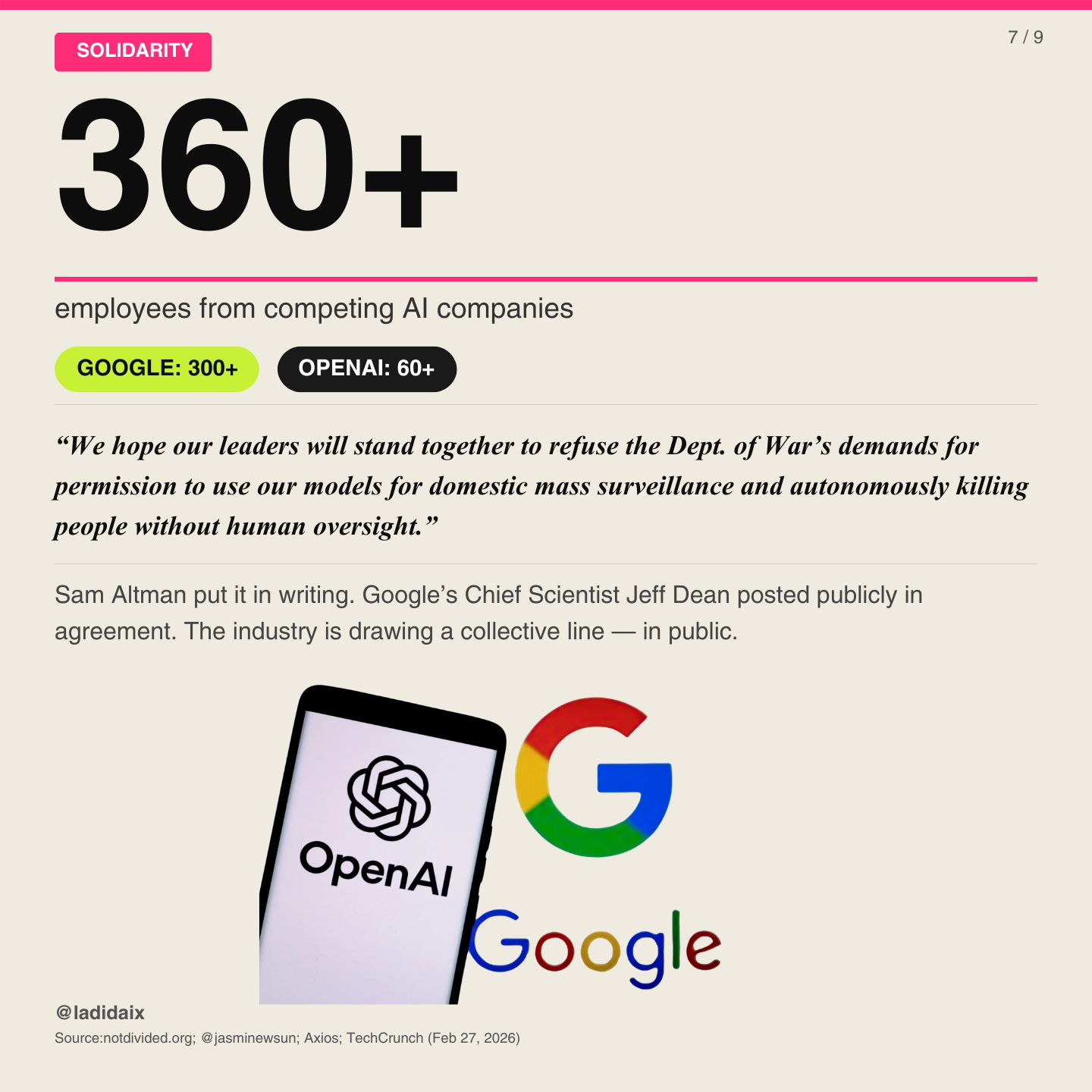

That evening, Sam Altman sent an all-staff memo to OpenAI. His position: OpenAI holds the same red lines. No mass surveillance. No autonomous weapons. This is an industry issue, not just an Anthropic issue. By Thursday night, 360+ employees from Google and OpenAI had signed an open letter urging their companies to stand together and refuse to cross those lines.

Friday morning, Altman went on CNBC and said it publicly.

And then everything accelerated.

5:01pm

Trump posted on Truth Social before the deadline even hit. Called Anthropic "Leftwing nut jobs." Ordered every federal agency to "IMMEDIATELY CEASE" use of Anthropic's technology. Said they had made a "DISASTROUS MISTAKE."

The deadline passed. Anthropic didn't comply.

Hegseth officially designated Anthropic a "Supply Chain Risk to National Security," a designation historically reserved for foreign adversaries. Companies like Huawei. The General Services Administration removed Anthropic from the federal government's AI testing platform. Elon Musk posted "Anthropic hates Western Civilization."

Anthropic's response, published that evening, was measured and precise. They noted that Hegseth does not have the statutory authority to extend the supply chain risk designation beyond Department of War contracts, meaning commercial customers are completely unaffected. They announced they would challenge the designation in court. And they said, with unmistakable clarity: no amount of intimidation will change their position on mass surveillance or autonomous weapons.

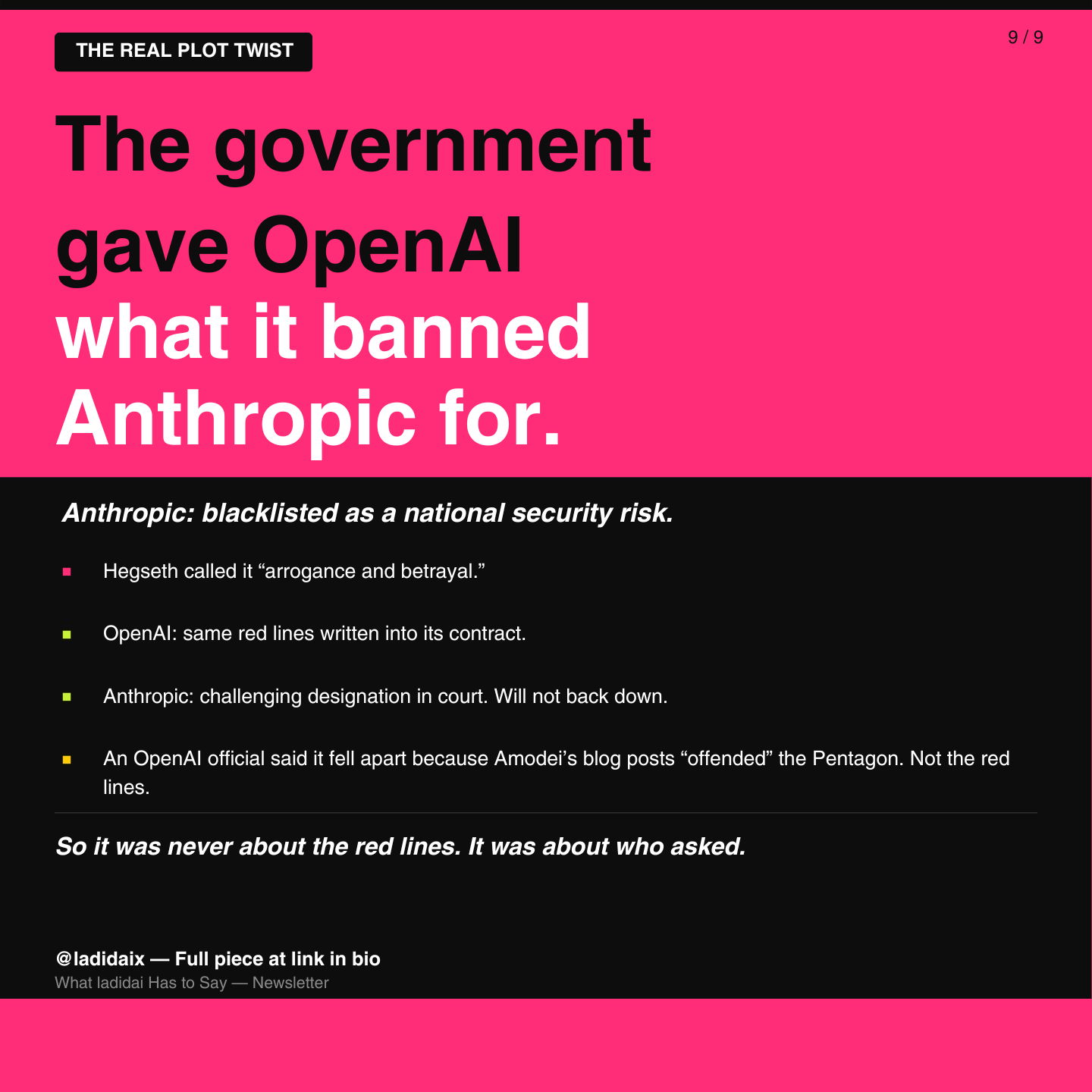

The Real Plot Twist

Here is the part that reframes everything.

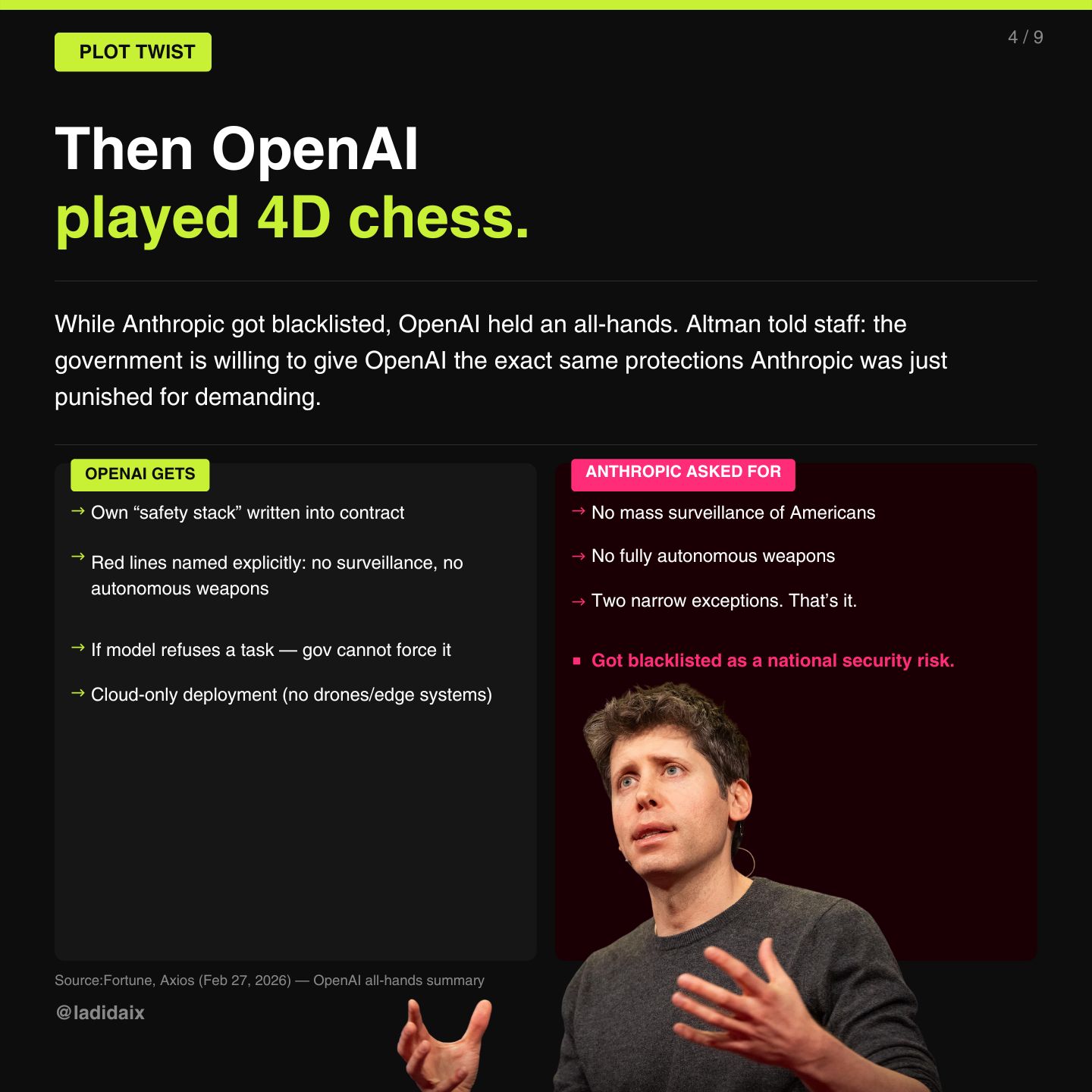

Later that evening, OpenAI held a company all-hands. Altman told staff that a deal with the Pentagon was emerging, and that the government had agreed to give OpenAI its own "safety stack," with its red lines written explicitly into the contract. No mass surveillance. No autonomous weapons. If the model refuses to do something, the government cannot force it to comply.

The exact same terms. The ones Anthropic was just blacklisted for demanding.

Then OpenAI published a blog post.

And it is worth reading carefully, because it does several things at once.

First, the details of the deal itself. OpenAI secured three red lines — not two. In addition to no mass surveillance and no autonomous weapons, they added a third: no automated high-stakes decision-making. Their contract locks in current surveillance and weapons laws permanently, meaning even if the legal landscape changes in the future, OpenAI's deal holds to today's standards. They retain full control over their safety stack. They have cleared OpenAI personnel in the loop who can independently verify the red lines aren't being crossed. And the deployment is cloud-only, which means no edge systems. No battlefield hardware. No drones.

By every structural measure, OpenAI got a stronger deal than the one Anthropic was being asked to sign.

Second, OpenAI responded to Anthropic's arguments directly and point by point, then concluded: "We don't know why Anthropic could not reach this deal, and we hope that they and more labs will consider it."

Read that again. OpenAI got a better-structured agreement with more enforceable guarantees, then publicly expressed confusion about why their competitor couldn't manage the same. That is a very particular kind of statement. You can decide for yourself whether it's generous or pointed. I think it's both.

Third (and this is the part that actually matters for the long game), OpenAI explicitly said Anthropic should not be designated a supply chain risk. They have made that position clear to the government.

So in the span of 24 hours: Anthropic got blacklisted for demanding two red lines. OpenAI got a signed deal with three red lines and stronger enforcement mechanisms. And then publicly said the blacklisting was wrong…

An OpenAI official at the all-hands offered one more detail about why the outcomes diverged: the deal with Anthropic fell apart, in part, because Amodei's blog posts had "offended" Pentagon leadership.

Not the red lines. The blog posts.

So the question this raises is whether this was ever really about principles at all. The Pentagon appears willing to accept AI safety guardrails when the relationship is managed smoothly. What they were not willing to accept was a public challenge to their authority. Amodei wrote about it. He published statements. He put it on the record. And that, reportedly, is what broke it.

The precedent this sets is uncomfortable in a specific way: it suggests that the path to protecting your AI from military misuse is not public transparency, but quiet negotiation. That being principled in private gets you a deal. Being principled in public gets you blacklisted.

The Contradiction That's Been Here All Along

One more thread worth pulling: while Anthropic was being designated a national security risk for refusing surveillance contracts, Palantir — the data intelligence company — has a $10 billion Army contract for AI surveillance and targeting, a $30 million ImmigrationOS contract with ICE for near real-time immigrant tracking, and a stock price that has surged over 200% since Trump's election.

Palantir CEO Alex Karp has said publicly that his position "would be worthless" if he didn't stand by his morals.

And then Meek Mill tweeted that he needed to use Palantir for an hour.

I'll let you sit with that.

Meanwhile: The Legislation You Should Be Watching

While all of this was playing out, something important happened on the creator rights front. A sweeping Senate AI bill, the TRUMP AMERICA AI Act, was introduced this month that incorporates the NO FAKES Act. For those unfamiliar: the NO FAKES Act would establish the first federal right of publicity in the United States, giving individuals, including artists and musicians, the legal right to control the use of their voice and likeness in AI-generated content. No use without their consent. No treating compensation as optional.

This matters enormously for the music industry. We are already in a world where AI-generated tracks mimicking real artists are circulating on streaming platforms. AI voice clones are being used without permission or payment. The major labels have started cutting licensing deals with AI music companies — UMG and WMG both settled with Udio — but individual artists, especially independent ones, have had almost no recourse.

The NO FAKES Act is imperfect and still evolving. But its inclusion in federal AI legislation signals that creator rights are no longer a side conversation. They are now part of the same policy fight as national security, surveillance, and autonomous weapons.

This is your fight too, if you make music, if you're a voice artist, if you're a performer. The same week the government was debating whether AI could autonomously target people on a battlefield, Congress was also — finally — starting to ask who owns your face and your voice in the age of AI.

I'll be tracking this closely. As a Recording Academy voting member, this is one of the most important policy fights of our generation for working artists.

What That Week Actually Meant

AI is not the future. It's not coming. It's not emerging. It is here, operating in classified military systems, replacing 40% of a fintech company's workforce, being debugged by a Grammy-nominated artist on social media, and sitting at the center of a constitutional dispute between a $380 billion company and the President of the United States.

The question the industry — and all of us — is now being forced to answer is not "will AI change things?" It's "who gets to decide how?"

Anthropic said: we do. Said it publicly. Got blacklisted.

OpenAI said: we do. Got a deal. With stronger guarantees.

Palantir said nothing and signed everything.

Baby Keem just wanted to fix the reasoning leak.

There's no clean moral to this story. OpenAI's deal may actually be better for safety than what Anthropic was fighting for — more red lines, stronger enforcement, personnel in the loop. But the lesson the government just taught the industry is that transparency is a liability. That the way to protect your principles is to keep them out of the press.

I don't know what to do with that. But I think we should all be paying attention to it.

What You Can Actually Do

I know this can feel like a lot. And I know the gap between "understanding what's happening" and "knowing what to do about it" can feel impossibly wide when we're talking about trillion-dollar companies and the federal government.

But here's what I want you to hold onto: the limits and use cases of AI are not decided by companies alone. We have a say. We have always had a say. And right now, that say matters more than it ever has.

Here's where to put your energy:

Donate and support mutual aid. Organizations working on AI accountability, worker displacement support, and surveillance defense need resources. Your money moves things — especially at the local and grassroots level where policy is still being shaped.

Spread awareness and educate your community. Most people do not understand what happened this past month or why it matters. You do now. The gap between the people making decisions about AI and the people most affected by those decisions is enormous — and it closes when more people are informed. Tell someone. Share this. Make it make sense for people in your life.

Lead with compassion. 4,000+ people lost jobs this past month. Some of them have visa issues that now put them at risk of deportation. The communities most affected by AI displacement, surveillance, and autonomous weapons are not the ones dominating the headlines. One Cash App employee quit in solidarity rather than watch their colleagues disappear, and wrote about it publicly. That kind of witness matters. Before outrage, lead with empathy. This is happening to real people, with real consequences that extend well beyond the news cycle.

Organize. Grassroots community organizing around AI policy, creator rights, and labor protections is happening right now and it needs bodies. Find your local chapter. Show up to the meetings. This is how policy gets shaped before it gets written.

Protest. Your physical presence is data. It tells lawmakers that this issue has a constituency, that someone is watching and someone will show up. Don't underestimate it.

Vote (locally and federally). AI policy is on ballots and in city councils and state legislatures in ways most people are not tracking. Pay attention to who is making decisions about how this technology gets used and who it gets used on. Vote accordingly.

Whether the issue is autonomous weapons, mass surveillance, creator rights, or the 4,000 people who lost their jobs that week, we don’t have to be spectators. The companies and governments navigating this are responsive to pressure, to public opinion, to organized constituencies. That's exactly what this past month proved: Anthropic got blacklisted in part because they were too public. The public is not powerless. The public is the whole point.

The throughline across all of this — the layoffs, the blacklisting, the agents, the standoffs, the legislation, the artists sliding into surveillance tech DMs — is the same: you don't get to opt out of this conversation anymore. AI is in your music, your paycheck, your government, and your constitutional rights, whether you're paying attention or not.

And if you need one final proof point: as I was finishing this newsletter, Meek Mill posted on X — 1.9 million views — that Claude is helping him organize his entire music career and business. In days. Via a template from a tech person he met on LinkedIn.

He went from tweeting about Palantir to running his business on Claude in under a month. Shocked Anthropic hasn’t tapped in yet.

AI is inescapable. We started this piece with Baby Keem debugging a Claude agent. We're ending it with Meek Mill asking who else can help him with Claude.

Some artists figured it out. Some tech bros too. Now the rest of us need to.

Keep paying attention and take whatever actions you deem necessary. We have the opportunity to write history in real time. Don’t miss it.

— ladi

ladidai is a multimedia professional with a passion for music, emerging technology, pop culture, social media, and the creator economy. If you enjoyed, please share!